Hi, @goldpiggy. Thank you for your answer.

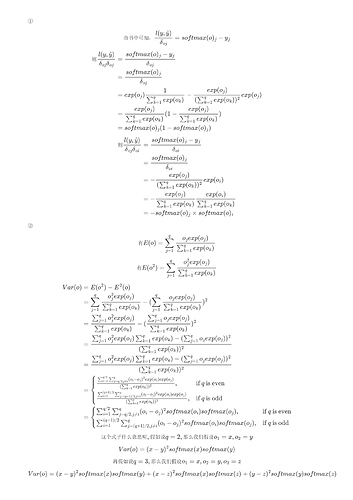

I think we are talking about different things here. You are talking about the derivatives of o_i w.r.t. o_j or the other way around (which are all zero because, as you mention, they are not functions of each other), whereas I am talking about the derivatives of the loss function, which depends on all the variables o_k (with k = 1, 2, ..., q, because we are assuming that the dimension of 𝐨 is q). We both agree that the gradient of the loss function exists, and that this gradient is a q-dimensional vector whose elements are the first-order partial derivatives of the loss function w.r.t. each of the independent variables o_k. Now, each of these derivatives is itself a function of all the variables o_k, and can therefore be differentiated again to obtain the second-order derivatives, as I explained in my first post.

But this is not a problem at all… I understand how to compute these derivatives, and I also managed to write a script that computes the Hessian matrix using torch.autograd.grad.

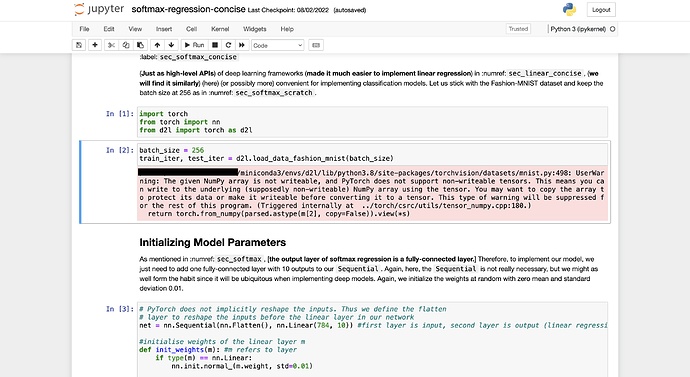
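For readers who want to reproduce this, here is a minimal sketch of that kind of computation. It is not necessarily the script shown above. It assumes the loss is the softmax cross-entropy written in terms of the logits, l(𝐲, 𝐨) = log Σ_k exp(o_k) − Σ_j y_j o_j, with a one-hot label 𝐲; the function names and the example values of `o` and `y` are made up for illustration.

```python
import torch

def softmax_cross_entropy(o, y):
    # Assumed loss: l(y, o) = log(sum_k exp(o_k)) - sum_j y_j * o_j
    # (valid when the entries of y sum to 1, e.g. a one-hot label).
    return torch.logsumexp(o, dim=0) - torch.dot(y, o)

def hessian_wrt_logits(o, y):
    # Work on a fresh leaf tensor so gradients are taken w.r.t. the logits.
    o = o.detach().clone().requires_grad_(True)
    loss = softmax_cross_entropy(o, y)
    # First-order partials; create_graph=True keeps them differentiable.
    (grad,) = torch.autograd.grad(loss, o, create_graph=True)
    rows = []
    for k in range(o.numel()):
        # Differentiate the k-th first-order partial w.r.t. every o_j.
        (row,) = torch.autograd.grad(grad[k], o, retain_graph=True)
        rows.append(row)
    return torch.stack(rows)

# Hypothetical example with q = 3.
o = torch.tensor([1.0, 2.0, 0.5])
y = torch.tensor([0.0, 1.0, 0.0])
H = hessian_wrt_logits(o, y)
print(H)
```

The result is a q × q matrix, i.e. the q^2 second-order partial derivatives mentioned above, not a single number.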

My question is related to Exercise 1 of this section of the book:

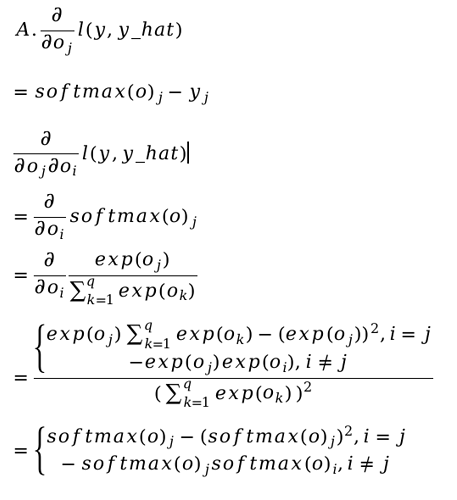

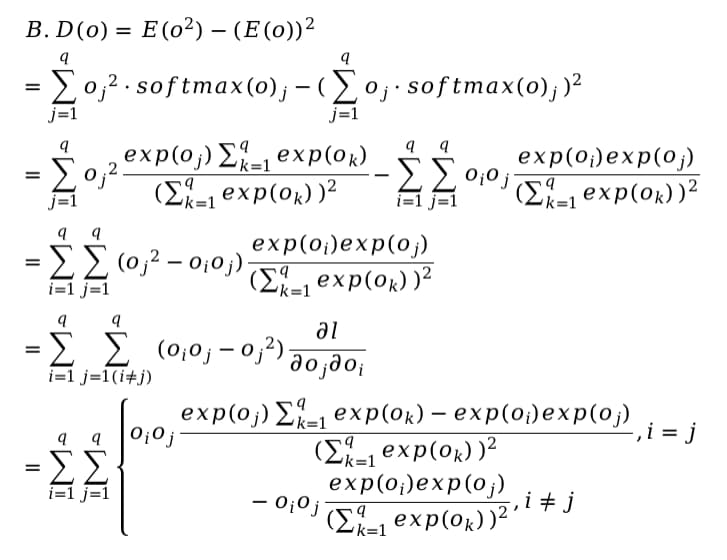

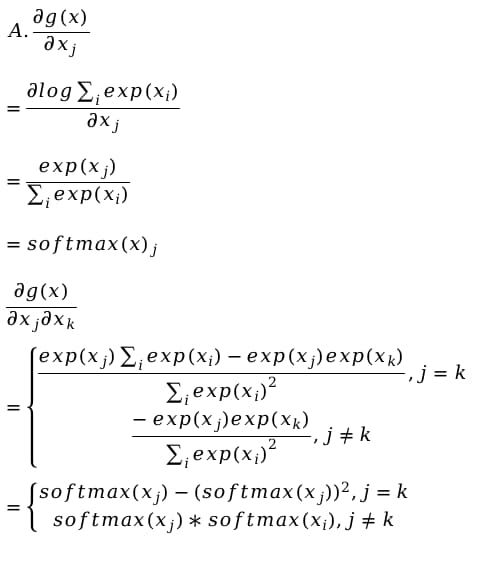

“Compute the variance of the distribution given by softmax(𝐨) and show that it matches the second derivative of the loss function.”

As I mentioned before, it is not clear to me what you mean here by “the second derivative” of the loss function. This “second derivative” should be a real number, because the variance V of the distribution is a real number, and we are trying to show that the two quantities are equal. But there are q^2 second-order partial derivatives, so what are we supposed to compare with the variance of the distribution? One of these derivatives? Some function of them?
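To be concrete about the q^2 quantities I am referring to: assuming the loss is the cross-entropy applied to softmax(𝐨) with a one-hot label 𝐲, as I understand it from this section, the first- and second-order partial derivatives should be the following (δ_ij is the Kronecker delta, a symbol I am introducing here).

```latex
\frac{\partial l}{\partial o_j} = \mathrm{softmax}(\mathbf{o})_j - y_j,
\qquad
\frac{\partial^2 l}{\partial o_i \, \partial o_j}
  = \mathrm{softmax}(\mathbf{o})_j \left( \delta_{ij} - \mathrm{softmax}(\mathbf{o})_i \right),
\qquad i, j = 1, \dots, q.
```

These form a q × q matrix that does not depend on 𝐲, so it is still unclear to me which scalar is meant to be compared with the variance.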

Thank you in advance for your answer!