@goldpiggy,

I don’t understand this either.

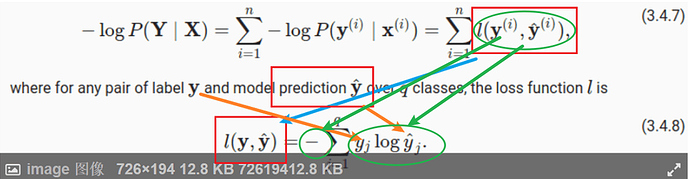

Hi @Gavin, great question. A simple answer is:

For more details, please check 22.7. Maximum Likelihood — Dive into Deep Learning 1.0.3 documentation

@goldpiggy

The simple answer seems to be a tautology.

I have read the URL you gave.

But I don’t think it answers this question.

I can’t find anything relevant in it.

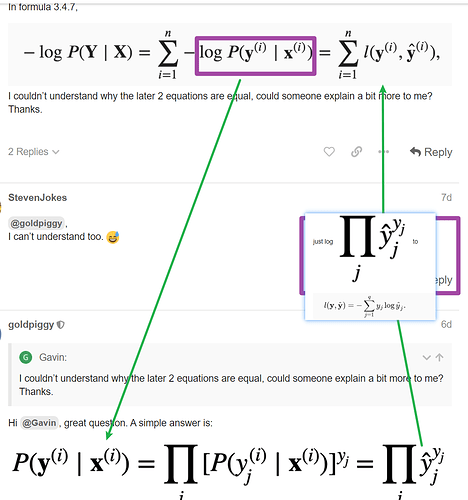

Hello. I still don’t clearly understand how these two equations are related. Can you please explain how, for a particular observation i, the probability of y given x is related to the entropy definition summed over all classes?

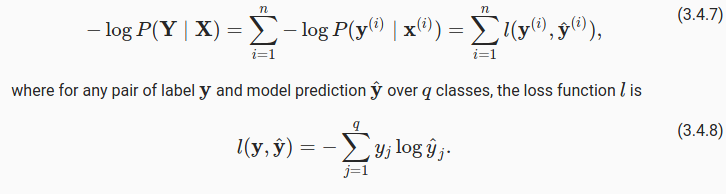

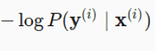

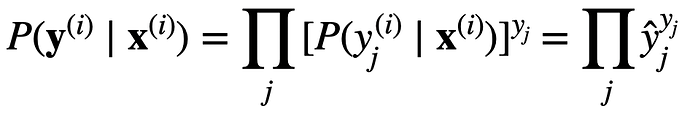

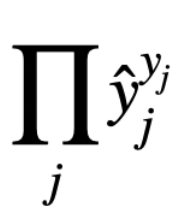

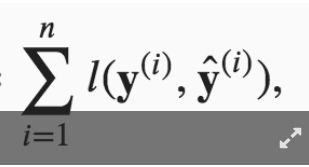

Thank you for your response. My question was more specifically why

is the same as

is the same as

l(y, y_hat)

Is this because y, when one-hot encoded, has a 1 in only a single position, so that when we sum y * log(y_hat) over all classes, only the log(y_hat) term for the true class remains? Please advise.
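For anyone who wants to check this intuition numerically, here is a minimal sketch. It assumes the usual definition l(y, y_hat) = -sum_j y_j * log(y_hat_j); the probabilities and the label are made-up values for illustration, not taken from the book.

```python
import math

def cross_entropy(y, y_hat):
    """l(y, y_hat) = -sum_j y_j * log(y_hat_j), summed over all classes."""
    return -sum(y_j * math.log(p_j) for y_j, p_j in zip(y, y_hat))

# Illustrative values only (assumptions, not from the thread).
y_hat = [0.1, 0.7, 0.2]  # predicted class probabilities
y = [0, 1, 0]            # one-hot label, true class is index 1

full_sum = cross_entropy(y, y_hat)
# With a one-hot y, every term with y_j = 0 vanishes, so only the
# true class contributes: -log(y_hat[true_class]).
true_class_only = -math.log(y_hat[y.index(1)])

print(full_sum, true_class_only)
```

Both printed numbers should agree, which suggests that for one-hot labels the sum over classes reduces to the negative log of the probability assigned to the true class.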

@Abinash_Sahu

l(y, y_hat)

is the cross-entropy loss.

It is only one of the types of loss we often use.

https://ml-cheatsheet.readthedocs.io/en/latest/loss_functions.html

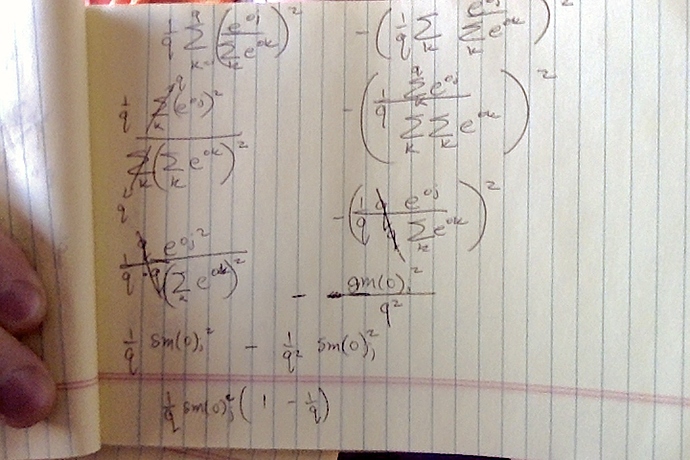

Q1.2. Compute the variance of the distribution given by softmax(o) and show that it matches the second derivative computed above.

Can someone point me in the right direction? I tried to use Var[X] = E[(X − E[X])^2] = E[X^2] − E[X]^2 to find the variance, but I ended up with a term 1/q^2… it doesn’t look like the second derivative from Q1.1.

Thanks!

It appears that there is a reference that remained unresolved:

:eqref: eq_l_cross_entropy

in 3.4.5.3

To keep a unified form, should the y_j in the latter two equations have a superscript (i)?

I cannot understand the equation in 3.4.9

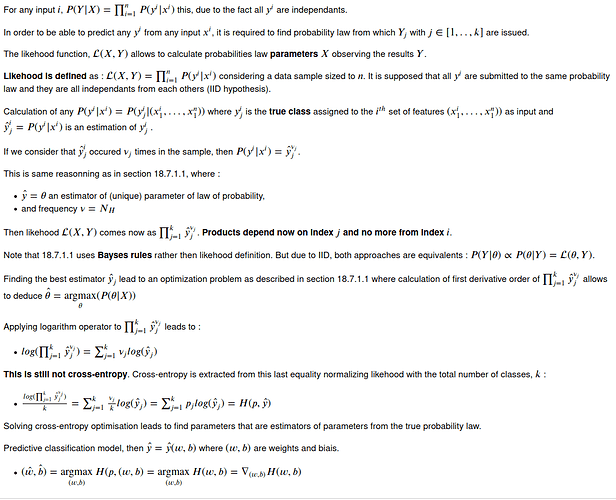

I may have an explanation based on the equivalence between log-likelihood and cross-entropy.

Looks like you have der(E[X]^2), but what about E[X^2]? Recall:

Var[X] = E[X^2] - E[X]^2
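A numerical sanity check may help here. The sketch below rests on an assumption about how to read the exercise: X_j is the indicator that class j is drawn from softmax(o), so E[X_j] = E[X_j^2] = p_j and Var[X_j] = p_j - p_j^2. It compares that with a finite-difference estimate of the second derivative of the loss with respect to o_j. The logits, the label, and the helper names are all made up for illustration.

```python
import math

def softmax(o):
    m = max(o)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in o]
    total = sum(exps)
    return [e / total for e in exps]

def loss(o, y):
    """Cross-entropy of softmax(o) vs. one-hot y: log(sum_k exp(o_k)) - sum_j y_j * o_j."""
    m = max(o)
    log_sum_exp = m + math.log(sum(math.exp(v - m) for v in o))
    return log_sum_exp - sum(y_j * o_j for y_j, o_j in zip(y, o))

def second_derivative(o, y, j, eps=1e-4):
    """Central finite-difference estimate of d^2 loss / d o_j^2."""
    up, down = list(o), list(o)
    up[j] += eps
    down[j] -= eps
    return (loss(up, y) - 2 * loss(o, y) + loss(down, y)) / eps ** 2

# Illustrative values only (assumptions, not from the thread).
o = [1.0, -0.5, 2.0]
y = [0, 0, 1]
p = softmax(o)

# Var[X_j] = E[X_j^2] - E[X_j]^2 = p_j - p_j^2 for a 0/1 indicator.
variances = [p_j - p_j ** 2 for p_j in p]
estimates = [second_derivative(o, y, j) for j in range(len(o))]

for j in range(len(o)):
    print(j, variances[j], estimates[j])
```

Under that reading, the two columns should agree up to finite-difference error, and no 1/q^2 term appears. The label y drops out of the second derivative because it only enters the loss linearly in o.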