If we can fully use past experience to guide our future actions, then the next question we should pay attention to is how similar the past and the future are.

In my understanding, over-fitting problems are more about the differences between past and future, while under-fitting problems are more about their similarities.

In exercise 1:

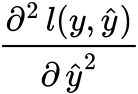

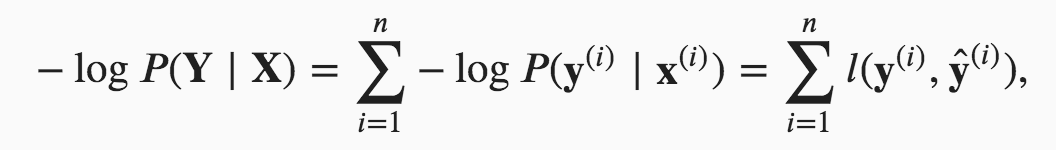

- Compute the second derivative of the cross-entropy loss 𝑙(𝐲,𝐲̂) for the softmax.

Does that mean

? And where can I find the answers to the exercises?

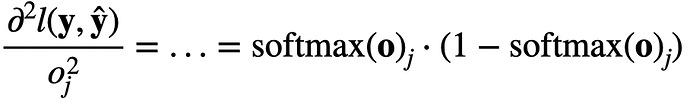

Hi @sheey, the second derivative will be:

$\frac{\partial^2 l(\mathbf{y}, \hat{\mathbf{y}})}{\partial o_j^2} = \ldots = \mathrm{softmax}(\mathbf{o})_j \cdot (1 - \mathrm{softmax}(\mathbf{o})_j)$

i.e.,

Sorry, we currently don’t provide the solutions. But feel free to ask questions on the discussion forum.
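For anyone who wants to sanity-check the formula numerically, here is a minimal sketch in plain Python. The logits `o`, the one-hot label `y`, and the helper names are made-up values for illustration, not taken from the book. It compares a central finite-difference estimate of the second derivative with $\mathrm{softmax}(\mathbf{o})_j (1 - \mathrm{softmax}(\mathbf{o})_j)$.

```python
import math

def softmax(o):
    """Numerically stable softmax of a list of logits."""
    m = max(o)
    exps = [math.exp(v - m) for v in o]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(y, o):
    """l(y, y_hat) with y_hat = softmax(o); y is a fixed one-hot label."""
    return -sum(y_k * math.log(p_k) for y_k, p_k in zip(y, softmax(o)))

def second_derivative_fd(y, o, j, eps=1e-4):
    """Central finite-difference estimate of d^2 l / d o_j^2."""
    up = list(o)
    up[j] += eps
    down = list(o)
    down[j] -= eps
    return (cross_entropy(y, up) - 2 * cross_entropy(y, o)
            + cross_entropy(y, down)) / eps ** 2

o = [0.5, -1.2, 2.0]  # arbitrary example logits (assumption)
y = [0.0, 0.0, 1.0]   # arbitrary one-hot label (assumption)

p = softmax(o)
analytic = [p_j * (1 - p_j) for p_j in p]
numeric = [second_derivative_fd(y, o, j) for j in range(len(o))]

for j, (a, n) in enumerate(zip(analytic, numeric)):
    print(f"j={j}: analytic={a:.6f}, finite difference={n:.6f}")
```

The two columns should agree up to finite-difference error. Note that the label `y` does not appear in the analytic expression at all.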

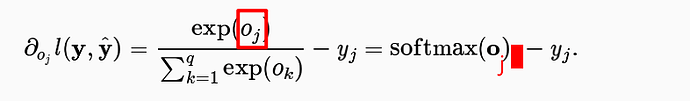

Do we only need to calculate the derivative of softmax(o)_j (is j inside or outside?) to get the second derivative of the cross-entropy loss 𝑙(𝐲,𝐲̂)?

I noticed that the second derivative of the cross-entropy loss 𝑙(𝐲,𝐲̂) is exactly the derivative of softmax(o)_j.

Is the derivative of y_j equal to 0? Is y_j either 1 or 0? Does j index the label?

Hi @StevenJokes, good question! Actually, $j$ should be outside, since we first calculate the softmax of the vector $o$ and then take its $j$-th component.

Yes. I don’t fully understand your question though.

When we say the derivative of $y_j$ is 0, does it mean that we think $y_j$ has no relationship with $o_j$?

Hi @StevenJokes, $y_j$ is the true label while $o_j$ is the model's output (the logit), i.e. $y_j$ is not a function of $o_j$.
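To see where the zero derivative enters, here is a sketch of the derivation. It assumes the label $\mathbf{y}$ is a fixed one-hot vector (so $\sum_k y_k = 1$) that is given by the dataset and does not change when $\mathbf{o}$ changes:

$$l(\mathbf{y}, \hat{\mathbf{y}}) = -\sum_k y_k \log \hat{y}_k = \log \sum_k \exp(o_k) - \sum_k y_k o_k$$

$$\frac{\partial l(\mathbf{y}, \hat{\mathbf{y}})}{\partial o_j} = \mathrm{softmax}(\mathbf{o})_j - y_j$$

$$\frac{\partial^2 l(\mathbf{y}, \hat{\mathbf{y}})}{\partial o_j^2} = \frac{\partial\, \mathrm{softmax}(\mathbf{o})_j}{\partial o_j} - \underbrace{\frac{\partial y_j}{\partial o_j}}_{=\,0} = \mathrm{softmax}(\mathbf{o})_j \cdot (1 - \mathrm{softmax}(\mathbf{o})_j)$$

The $y_j$ term drops out in the last step only because the label is treated as a constant with respect to $\mathbf{o}$.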

How do we judge whether $a$ is a function of $b$ or not?

Or do we just judge by whether we have defined it as one, rather than by whether $a$ actually has a relationship with $b$ in reality?

I think it is a function in reality. But Newton’s calculus can’t compute its derivative, just because the function is discrete.

Please check https://github.com/d2l-ai/d2l-en/issues/1141 soon. I think it may make all the eval results wrong.

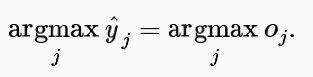

Hi @StevenJokes, $y$ is the true label, while $\hat{y}$ is the estimated label. Hence $\hat{y}$ is a function of $o$, while $y$ is not.

I already understand that $y_j$ is the true label, e.g. one-hot encoded. But where our thinking diverges is that I think the true label has a certain relationship with $o_j$, so I think $y_j$ is also a function of $o_j$. When we get the same $o_j$, we get one and only one $y_j$. Doesn’t that conform to the definition of a function?

But the function is discrete.

As we saw in “Freshness and Distribution Shift”, if production data is different from the data a model was trained on, a model may struggle to perform. To help with this, you should check the inputs to your pipeline.

Source: 10. Build Safeguards for Models, in Building Machine Learning Powered Applications [Book]

Although softmax is a nonlinear function, the outputs of softmax regression are still determined by an affine transformation of input features; thus, softmax regression is a linear model.

Can anyone explain why this is so? When we say a model is linear, we mean that it is linear in its parameters. But in softmax regression we apply the softmax function, which is nonlinear, so the model becomes nonlinear in its parameters.

Just speaking statistically!
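One common reading of the quoted claim (my interpretation, not an official answer) is that "linear" refers to the logits $\mathbf{o} = \mathbf{W}\mathbf{x} + \mathbf{b}$: softmax is monotone, so it never changes which class wins, and the log-odds between any two classes equal the difference of two logits, which is affine in $\mathbf{x}$. A minimal sketch with made-up weights `W`, bias `b`, and test points:

```python
import math

def affine(W, b, x):
    """Logits o = W x + b for one input vector x."""
    return [sum(w_i * x_i for w_i, x_i in zip(row, x)) + b_k
            for row, b_k in zip(W, b)]

def softmax(o):
    """Numerically stable softmax of a list of logits."""
    m = max(o)
    exps = [math.exp(v - m) for v in o]
    total = sum(exps)
    return [e / total for e in exps]

def argmax(v):
    """Index of the largest entry."""
    return max(range(len(v)), key=lambda k: v[k])

# Hypothetical parameters: 3 classes, 2 features.
W = [[1.0, -2.0], [0.5, 0.3], [-1.5, 1.0]]
b = [0.1, -0.2, 0.0]
points = [[0.3, 1.2], [-2.0, 0.5], [1.5, -0.7], [0.0, 0.0]]

# The predicted class is the same with or without softmax.
same_prediction = all(
    argmax(affine(W, b, x)) == argmax(softmax(affine(W, b, x)))
    for x in points
)

# The log-odds between classes 0 and 1 equal the gap between their logits.
o = affine(W, b, points[0])
p = softmax(o)
log_odds = math.log(p[0] / p[1])
logit_gap = o[0] - o[1]

print(same_prediction, log_odds, logit_gap)
```

So the probabilities themselves are nonlinear in the parameters, but the decision boundaries between classes are hyperplanes, as in a generalized linear model.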

Exercise 1.1

Apparently I copied the answer above.

1.2 The variance is a vector, and its j-th element has exactly the form above, namely softmax(o)_j(1-softmax(o)_j).

Exercise 3.3 Use the squeeze theorem and it’s easy to prove.

3.4 softmin could be softmax(-x)? I don’t know.

3.5 pass (too hard to type on the computer)
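For 3.4, a quick numerical sketch. It assumes softmin is defined as $\mathrm{softmin}(\mathbf{x})_i = \exp(-x_i) / \sum_k \exp(-x_k)$; that definition is my assumption, not taken from the exercise text. Under it, softmin coincides with softmax applied to $-\mathbf{x}$, and the smallest input gets the largest weight.

```python
import math

def softmax(x):
    """Numerically stable softmax of a list of numbers."""
    m = max(x)
    exps = [math.exp(v - m) for v in x]
    total = sum(exps)
    return [e / total for e in exps]

def softmin(x):
    """Assumed definition: softmin(x)_i = exp(-x_i) / sum_k exp(-x_k)."""
    exps = [math.exp(-v) for v in x]
    total = sum(exps)
    return [e / total for e in exps]

x = [1.0, 3.0, -0.5]  # arbitrary example values (assumption)

direct = softmin(x)
via_softmax = softmax([-v for v in x])

print(direct)
print(via_softmax)
```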