http://d2l.ai/chapter_recurrent-neural-networks/sequence.html

Greetings.

Thanks for making this book available.

In the toy example 8.1.2, when creating the dataset, I was wondering if it was normal for both the train set and the test set to be the same. Namely:

```python
train_iter = d2l.load_array((features[:n_train], labels[:n_train]),
                            batch_size, is_train=True)
test_iter = d2l.load_array((features[:n_train], labels[:n_train]),
                           batch_size, is_train=False)
```

For test_iter, I would have expected something like:

```python
test_iter = d2l.load_array((features[n_train:], labels[n_train:]),
                           batch_size, is_train=False)
```
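A minimal sketch of the split expected here, assuming `d2l.load_array` behaves like a `TensorDataset` wrapped in a `DataLoader` that shuffles only for training. The `load_array` below is a hypothetical stand-in written for this example, and the tensors are random placeholders with the toy example's shapes (`T = 1000`, `tau = 4`, `n_train = 600`, `batch_size = 16` are assumed values):

```python
import torch
from torch.utils import data

# Hypothetical stand-in for d2l.load_array: wrap tensors in a TensorDataset
# and shuffle only when building a training iterator.
def load_array(data_arrays, batch_size, is_train=True):
    dataset = data.TensorDataset(*data_arrays)
    return data.DataLoader(dataset, batch_size, shuffle=is_train)

# Placeholder tensors with the toy example's shapes (T - tau rows, tau columns).
T, tau, n_train, batch_size = 1000, 4, 600, 16
features = torch.randn(T - tau, tau)
labels = torch.randn(T - tau, 1)

# Training uses the first n_train examples; the held-out set uses the rest.
train_iter = load_array((features[:n_train], labels[:n_train]),
                        batch_size, is_train=True)
test_iter = load_array((features[n_train:], labels[n_train:]),
                       batch_size, is_train=False)

print(len(train_iter.dataset), len(test_iter.dataset))
```

With `is_train=False` the held-out loader keeps the original order, so its first batch is exactly `features[n_train:n_train + batch_size]`.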

Thanks for your time.

I see. I hadn't gotten that far down yet, haha.

8.1.2. A Toy Example

```python
features = d2l.zeros((T-tau, tau))
```

AttributeError: module 'd2l.torch' has no attribute 'zeros'

Then I searched http://preview.d2l.ai/d2l-en/PR-1077/search.html?q=d2l.zeros&check_keywords=yes&area=default

There is no source code for it:

http://preview.d2l.ai/d2l-en/PR-1077/chapter_appendix-tools-for-deep-learning/d2l.html?highlight=d2l%20zeros#d2l.torch.zeros

For now, I can use `features = d2l.torch.zeros((T-tau, tau))` as a replacement, and I'll try to write the code myself next time!

An hour to debug!

```python
for i in range(tau):
    features[:, i] = x[i: i + T - tau - max_steps + 1].T
```

What's the purpose of `.T` at the end of the line above? It seems to make no difference.

I couldn't agree more. Transposing a rank-1 tensor returns it unchanged.
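A sketch of the basic one-step feature construction from the toy example, written with plain `torch.zeros` instead of `d2l.zeros` and without the `.T`. The noisy sine follows the toy example; the constants are assumptions. The last check uses `torch.t`, which the PyTorch documentation says returns 0-D and 1-D tensors as is, to confirm that transposing the 1-D slice changes nothing:

```python
import torch

T, tau = 1000, 4
time = torch.arange(1, T + 1, dtype=torch.float32)
x = torch.sin(0.01 * time) + torch.normal(0, 0.2, (T,))

# torch.zeros used directly in place of d2l.zeros.
features = torch.zeros((T - tau, tau))
for i in range(tau):
    # x[i: T - tau + i] is already 1-D with T - tau entries, so no transpose is needed.
    features[:, i] = x[i: T - tau + i]
labels = x[tau:].reshape((-1, 1))

# torch.t leaves a 1-D tensor as is, so transposing the slice is a no-op.
v = x[0: T - tau]
print(torch.equal(v.t(), v), features.shape, labels.shape)
```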

Also this code

```python
for i in range(n_train + tau, T):
    multistep_preds[i] = d2l.reshape(net(
        multistep_preds[i - tau: i].reshape(1, -1)), 1)
```

can be simply written as

```python
for i in range(n_train + tau, T):
    multistep_preds[i] = net(multistep_preds[i - tau: i])
```

I agree. Fixing: https://github.com/d2l-ai/d2l-en/pull/1542

Next time you can PR first: http://preview.d2l.ai/d2l-en/master/chapter_appendix-tools-for-deep-learning/contributing.html
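A sketch of the simplified multi-step loop, assuming an MLP shaped like the toy example's (`tau` inputs, one output). The network here is untrained and the signal is noise-free, so only the mechanics and shapes matter, not prediction quality. Wrapping the loop in `torch.no_grad()` is a choice made for this sketch, so that writing outputs back into `multistep_preds` does not build an autograd graph:

```python
import torch
from torch import nn

torch.manual_seed(0)
T, tau, n_train = 1000, 4, 600

# Hypothetical untrained MLP with the toy example's shape: tau inputs, one output.
net = nn.Sequential(nn.Linear(tau, 10), nn.ReLU(), nn.Linear(10, 1))

time = torch.arange(1, T + 1, dtype=torch.float32)
x = torch.sin(0.01 * time)

# Seed the sequence with observed values, then feed predictions back in.
multistep_preds = torch.zeros(T)
multistep_preds[: n_train + tau] = x[: n_train + tau]
with torch.no_grad():
    for i in range(n_train + tau, T):
        # The 1-D window has shape (tau,); net returns shape (1,),
        # which is written into the single slot multistep_preds[i].
        multistep_preds[i] = net(multistep_preds[i - tau: i])

print(multistep_preds.shape)
```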

I couldn't help but notice the similarities between the latent autoregressive model and hidden Markov models. The difference seems to be that in the latent autoregressive model the hidden sequence h_t might change over time t, while in hidden Markov models the hidden sequence h_t remains the same for all t. Am I correct in assuming this?

Hi everybody,

I have a math question. In particular, what does the sum over x_t in equation 8.1.4 mean?

Is it the sum over all possible states x_t? That doesn't make much sense to me, because if I have observed x_(t+1), there is just one possible x_t.

Could someone help me in understanding that?

Thanks a lot!

Yes, it's the sum over all possible states x_t. x_(t-1) depends on x_t; however, even when x_(t-1) is observed, x_t is not certain, since different values of x_t can lead to the same x_(t-1).

Hope it helps with your understanding.
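Assuming the equation in question is the first-order Markov step P(x_{t+1} | x_{t-1}) = sum over x_t of P(x_{t+1} | x_t) P(x_t | x_{t-1}), the sum runs over x_t because x_t is the unobserved middle state: we condition on x_{t-1} and ask about x_{t+1}, so every value x_t could take contributes one path. A toy sketch with a hypothetical 3-state chain (the transition matrix is made up for illustration):

```python
import torch

# Hypothetical 3-state Markov chain: P[a, b] = P(x_{t+1} = b | x_t = a).
P = torch.tensor([[0.7, 0.2, 0.1],
                  [0.3, 0.4, 0.3],
                  [0.2, 0.3, 0.5]])

prev_state = 0  # the observed value of x_{t-1}

# Sum over every value x_t could take, weighting each path by its probability.
two_step = torch.zeros(3)
for x_t in range(3):
    two_step += P[prev_state, x_t] * P[x_t]
print(two_step)

# The same sum written as one row of the matrix product P @ P.
print(torch.allclose(two_step, (P @ P)[prev_state]))
```

The result is still a probability distribution over x_{t+1}, which is why the marginalized x_t does not need to be known.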

Exercises

1.1 I tried increasing tau up to 200, but to be honest, I could not get a good model.

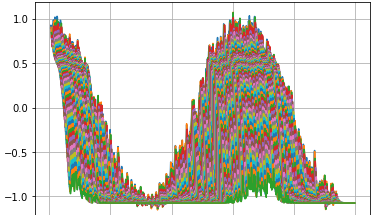

2. This is just like k-step-ahead prediction. It might give good results in the short term, but it will do badly for long-term prediction based on the current data, because the errors pile up.

3. In some instances we can predict what the next word will be, and in other cases we can narrow it down to a range of words that are likely to follow. So yes, causality applies to text to some extent.

4. We can use a latent autoregressive model when the number of features becomes too large; in that case, latent autoregressive models are useful.
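A toy illustration of the error pile-up in answer 2, using a hypothetical AR(1) signal x_t = 0.99 x_{t-1} and a model whose coefficient is slightly off (0.98). One-step-ahead predictions always start from the true previous value, so each error stays small; k-step-ahead predictions feed on the model's own outputs, so the coefficient error compounds:

```python
true_a, model_a = 0.99, 0.98  # hypothetical true and fitted AR(1) coefficients
steps = 200

# True noise-free signal starting from x_0 = 1.
x = [true_a ** t for t in range(steps)]

# One-step-ahead: each prediction uses the true previous value.
one_step_err = [abs(model_a * x[t - 1] - x[t]) for t in range(1, steps)]

# k-step-ahead: after x_0, the model only sees its own previous predictions.
preds = [x[0]]
for t in range(1, steps):
    preds.append(model_a * preds[t - 1])
multi_step_err = [abs(preds[t] - x[t]) for t in range(1, steps)]

print(max(one_step_err), max(multi_step_err))
```

Here the one-step error never exceeds 0.01, while the k-step error grows to about 0.25 before both sequences decay toward zero.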

About regression errors piling up, the book says that “We will discuss methods for improving this throughout this chapter and beyond.”

The whole chapter seems devoted to text-processing models. To find solutions that work well with "k-step-ahead predictions", do I have to skip ahead to LSTM networks?

Exercises and my stupid answers

Improve the model in the experiment of this section.

- Incorporate more than the past 4 observations? How many do you really need?

(Tried, in a limited way. Close to one seems better.)

- How many past observations would you need if there was no noise? Hint: you can write sin and cos as a differential equation.

(I tried with sin and cos. It's the same story: `x = torch.cos(time * 0.01)`.)

- Can you incorporate older observations while keeping the total number of features constant? Does this improve accuracy? Why?

(I don't get the question.)
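For the noise-free and "older observations" questions above, a sketch under stated assumptions: `lagged_features` is a hypothetical helper that builds one column per lag. Without noise, two lags are enough for a sine, because sin(w t) = 2 cos(w) sin(w (t - 1)) - sin(w (t - 2)); a least-squares fit with `torch.linalg.lstsq` recovers those two coefficients. One possible reading of the third question (an interpretation, not necessarily what the book intends) is dilated lags such as 1, 2, 4, 8, which reach 8 steps back while keeping 4 features:

```python
import torch

def lagged_features(x, lags):
    """Hypothetical helper: one column per lag, column for lag k holds x[t - k]."""
    max_lag = max(lags)
    n = len(x) - max_lag
    # Rows correspond to targets t = max_lag, ..., len(x) - 1.
    feats = torch.stack([x[max_lag - k: max_lag - k + n] for k in lags], dim=1)
    labels = x[max_lag:]
    return feats, labels

w = 0.01
time = torch.arange(1, 1001, dtype=torch.float64)
x = torch.sin(w * time)  # noise-free signal, no torch.normal term

# Two lags: least squares should recover [2 * cos(w), -1].
X, y = lagged_features(x, lags=[1, 2])
coef = torch.linalg.lstsq(X, y.unsqueeze(1)).solution.squeeze(1)
print(coef)

# Dilated lags look 8 steps back but still give only 4 feature columns.
X_dil, y_dil = lagged_features(x, lags=[1, 2, 4, 8])
print(X_dil.shape)
```

Whether dilated lags improve accuracy on the noisy toy data is not tested here; the sketch only shows how the features could be built.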

Change the neural network architecture and evaluate the performance.

(Tried. Same result.)

An investor wants to find a good security to buy. He looks at past returns to decide which one is likely to do well. What could possibly go wrong with this strategy?

(The only constant in life is change.)

Does causality also apply to text? To which extent?

(I believe words do have some causality: you can expect "h" to follow "W" at the start of a sentence with a relatively high degree of confidence. But it depends heavily on the topic.)

Give an example for when a latent autoregressive model might be needed to capture the dynamic of the data.

(In the stock market!)

Also, I wanted to ask about the prediction step here:

```python
for i in range(n_train + tau, T):
    # predicting simply based on the past 4 predictions
    multistep_preds[i] = net(multistep_preds[i - tau:i].reshape((-1, 1)))
```

It doesn't work until I do this:

```python
for i in range(n_train + tau, T):
    # predicting simply based on the past 4 predictions
    multistep_preds[i] = net(multistep_preds[i - tau:i].reshape((-1, 1)).squeeze(1))
```

Perhaps I am doing something wrong, but does this need a pull request?

Here is the reference notebook: https://www.kaggle.com/fanbyprinciple/sequence-prediction-with-autoregressive-model/
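A small shape check for the question above, assuming `net` is the toy example's MLP with `tau = 4` inputs (the hidden size is an assumption, and `window` stands in for `multistep_preds[i - tau: i]`). `reshape((-1, 1))` turns the window into four examples with one feature each, which does not match the first `nn.Linear`'s four input features; `.squeeze(1)` simply undoes that reshape. A 1-D window, or `reshape((1, -1))` (one example with four features), both match:

```python
import torch
from torch import nn

tau = 4
# Hypothetical MLP with tau input features, as in the toy example.
net = nn.Sequential(nn.Linear(tau, 10), nn.ReLU(), nn.Linear(10, 1))
window = torch.randn(tau)  # stand-in for multistep_preds[i - tau: i]

print(net(window).shape)                   # 1-D input gives shape (1,)
print(net(window.reshape((1, -1))).shape)  # one example of tau features gives (1, 1)

# .squeeze(1) undoes reshape((-1, 1)), giving back the 1-D window.
print(torch.equal(window.reshape((-1, 1)).squeeze(1), window))

column_failed = False
try:
    net(window.reshape((-1, 1)))  # shape (4, 1): 4 examples with 1 feature each
except RuntimeError as err:
    column_failed = True
    print("shape mismatch:", err)
print(column_failed)
```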

Thanks for the Kaggle notebook, which I didn't know about. It implements the same code as the book, with these improvements:

- Incorporate more than the past 4 observations? → almost no difference
- Remove noise → same problems

So it is a problem with this architecture and with RNNs. I should probably finish the chapter, even though it isn't about regression, try to adapt it to regression, and then do the same with chapter 9 :-/ A very long task.

Thank you, Gianni. It's my notebook; I implemented it. All the best with completing the chapter!

Do LSTM models solve some of the problems from my previous question?