https://d2l.ai/chapter_generative-adversarial-networks/dcgan.html

PyTorch version: http://preview.d2l.ai/d2l-en/master/chapter_generative-adversarial-networks/dcgan.html

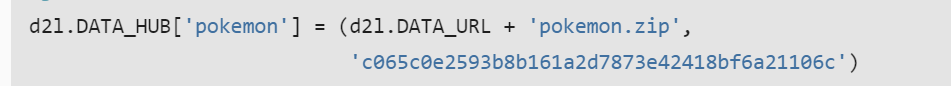

I noticed that c065c0e2593b8b161a2d7873e42418bf6a21106c in

And every DATA_HUB entry has such a number.

Where did these numbers come from? @goldpiggy

How can I produce these numbers?

Hey @StevenJokes, great question! This is the hash code for the dataset. Please see more details here.

(BTW, the Pokémon dataset will be deprecated in the future because of legal constraints :( )

`hashlib.sha1()` computes a SHA-1 hash. Got it (see hashlib).
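As I understand it, each DATA_HUB hash is the SHA-1 hex digest of the downloaded file, so you can produce one yourself by hashing the file. A minimal sketch, using the same chunked read loop discussed in this thread (the helper name `sha1_of_file` is my own, not part of d2l):

```python
import hashlib


def sha1_of_file(path, chunk_size=1048576):
    """Return the SHA-1 hex digest of the file at `path`.

    The file is opened in binary mode and fed to the hash `chunk_size`
    bytes (2**20 = 1 MiB by default) at a time, so a large dataset archive
    never has to fit in memory all at once.
    """
    sha1 = hashlib.sha1()
    with open(path, 'rb') as f:
        while True:
            data = f.read(chunk_size)
            if not data:  # b'' means the end of the file was reached
                break
            sha1.update(data)
    return sha1.hexdigest()
```

Calling `sha1_of_file('path/to/archive.zip')` gives a 40-character hex string of the same kind as the DATA_HUB entries. The chunk size does not affect the result; it only bounds memory use.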

Reading the file:

`data = f.read(1048576)  # read the next chunk of at most 1,048,576 bytes (1 MiB)`

`if not data:  # an empty result means the end of the file has been reached`

`break`

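1,048,576 = 2^20, so each call reads at most 2^20 bytes (1 MiB) when the file is opened in binary mode, or at most 2^20 characters (returned as a string) in text mode. It is a chunk size, not a number of rows.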

Since Excel 2007, a worksheet has 1,048,576 rows, and its last column is XFD.

Problem: Excel CSV file with more than 1,048,576 rows of data

How to fix it: Lazy Method for Reading Big File in Python?

Why 1,048,576 rows? Haven't found out yet.
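For what it's worth, 1,048,576 is 2**20, which is also Excel's row limit, but in the read loop above it only counts bytes (or characters in text mode), so I don't think the two numbers are related beyond both being powers of two. Here is a minimal sketch of lazy, chunked reading with a generator (the name `read_in_chunks` is my own choice), run on an in-memory file so the chunk sizes are visible:

```python
import io


def read_in_chunks(file_obj, chunk_size=1048576):
    """Lazily yield successive chunks from an already-open file object.

    In binary mode each chunk holds at most `chunk_size` bytes; in text mode,
    at most `chunk_size` characters. Rows or lines are never counted.
    """
    while True:
        data = file_obj.read(chunk_size)
        if not data:  # empty result: end of file
            return
        yield data


# Demo: an in-memory binary "file" of 2.5 MiB (2 * 2**20 + 2**19 bytes)
buf = io.BytesIO(b'\x00' * (2 * 1048576 + 524288))
sizes = [len(chunk) for chunk in read_in_chunks(buf)]
print(sizes)
```

Only one chunk is held in memory at a time, which is what makes the approach work for files that are much larger than RAM.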

`fp.extractall(base_dir)  # extract all archive members into base_dir`

os.path.join(base_dir, folder) if folder else data_dir

Does this add folder or data_dir to the current path?
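As I read these two lines, `fp.extractall(base_dir)` unpacks the archive into the directory that holds the downloaded file, and the return line is only path arithmetic: it does not add anything to the current path or to `sys.path`. It returns `base_dir/folder` when a `folder` name is passed and `data_dir` otherwise, assuming (as in the d2l helper I'm looking at) that `data_dir` comes from `os.path.splitext(fname)`, i.e. the archive path minus its extension. The sketch below reproduces that logic for zip files only; `extract_and_locate` and the toy archive are hypothetical names, not d2l's API.

```python
import os
import tempfile
import zipfile


def extract_and_locate(fname, folder=None):
    """Hypothetical helper: extract a zip archive next to itself, return the data directory."""
    base_dir = os.path.dirname(fname)        # directory that contains the archive
    data_dir, ext = os.path.splitext(fname)  # archive path without its '.zip' suffix
    if ext != '.zip':
        raise ValueError('this sketch only handles .zip archives')
    with zipfile.ZipFile(fname, 'r') as fp:
        fp.extractall(base_dir)              # unpack every member into base_dir
    # Pure path arithmetic: nothing is added to sys.path and the cwd is unchanged.
    # With `folder`, point at base_dir/folder; otherwise assume the archive
    # unpacks into a directory named after the archive itself.
    return os.path.join(base_dir, folder) if folder else data_dir


# Demo with a throwaway archive 'toy.zip' whose members live under 'toy/'
with tempfile.TemporaryDirectory() as tmp:
    archive = os.path.join(tmp, 'toy.zip')
    with zipfile.ZipFile(archive, 'w') as zf:
        zf.writestr('toy/a.txt', 'hello')
    extracted = extract_and_locate(archive)
    demo_path_ok = extracted == os.path.join(tmp, 'toy')
    demo_files = os.listdir(extracted)
print(demo_path_ok, demo_files)
```

Passing `folder` is only needed when the top-level directory inside the archive is named differently from the archive file itself.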

I noticed that BASE_DIR is used more frequently. Maybe we should switch to it?

Despite being named Pokémon Database, currently we do not offer any database or API (Application Programming Interface) for use, though it may be something we offer in the future.

It should be noted here that we are unable to make a Pokémon app as Nintendo gets them taken down from all app stores.

Download: https://peltarion.com/knowledge-center/documentation/terms/dataset-licenses/pokemon-images

I noticed it is (or was?) licensed under CC BY 4.0. Where are the legal constraints?

I searched for legal constraints on this dataset and didn't find any information about them. How can I confirm whether a dataset can be used publicly?


Combined:

Separate:

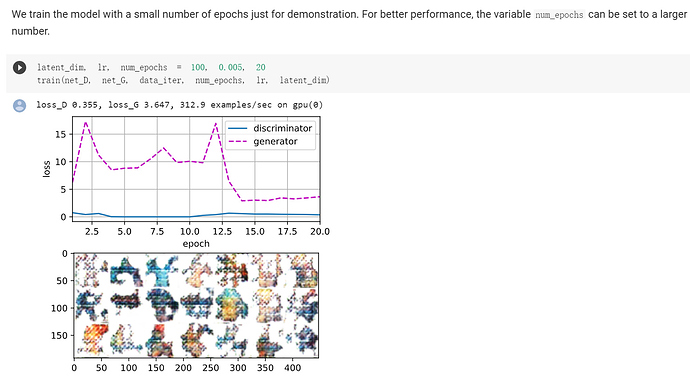

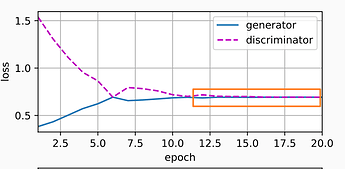

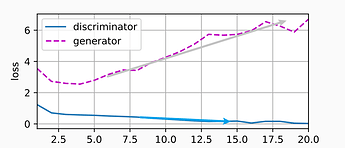

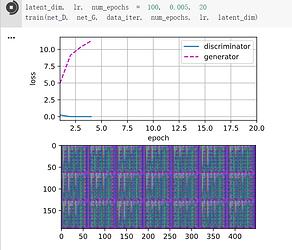

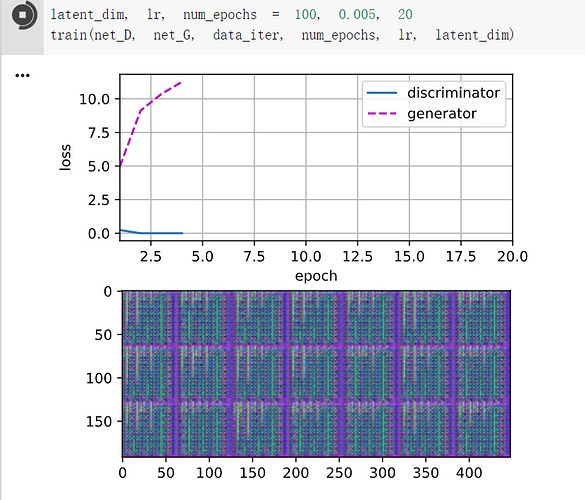

Why didn't the discriminator loss go up and the generator loss go down?

Why doesn't this match Section 17.1, where the two losses eventually move together?

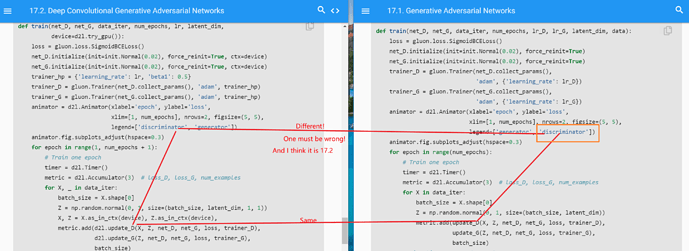

I think there is a bug:

After switching,

issue: https://github.com/d2l-ai/d2l-en/issues/1326

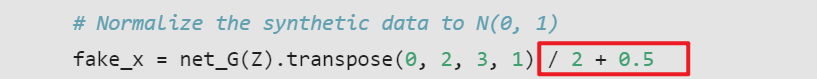

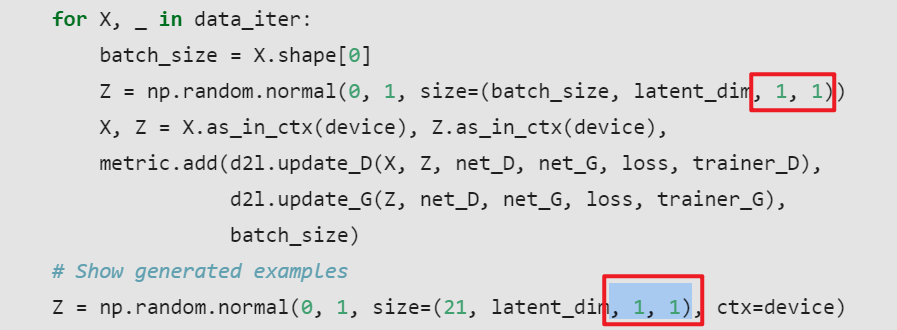

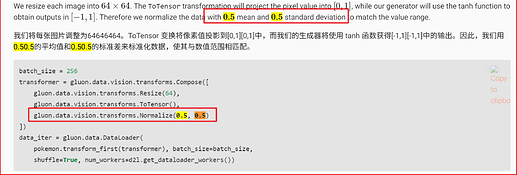

We stated earlier in the text that "we normalize the data with 0.5 mean and 0.5 standard deviation to match the value range."
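A quick arithmetic check of that normalization, assuming pixels are first scaled to [0, 1] (as torchvision's `ToTensor()` does) and then normalized with mean 0.5 and standard deviation 0.5, i.e. y = (x - 0.5) / 0.5: this maps [0, 1] onto [-1, 1], which as I understand it is meant to match the range of the generator's final tanh. Going back to displayable [0, 1] values takes y / 2 + 0.5. Plain Python, no torchvision needed:

```python
def normalize(x, mean=0.5, std=0.5):
    """Normalize a pixel value in [0, 1]: (x - mean) / std, here 2 * x - 1."""
    return (x - mean) / std


def denormalize(y):
    """Invert normalize() for mean = std = 0.5: map [-1, 1] back to [0, 1]."""
    return y / 2 + 0.5


for x in (0.0, 0.25, 0.5, 1.0):
    y = normalize(x)
    print(x, '->', y, '->', denormalize(y))
```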

Why do we need

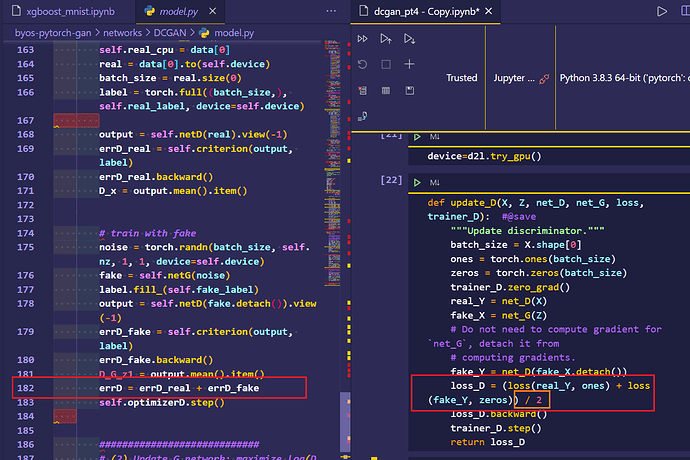

/2? The code I'm contrasting with is from https://aws.amazon.com/cn/blogs/china/easily-build-pytorch-generative-adversarial-networks-gan/

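loss_real is the discriminator loss on real samples (with its computation graph attached), and loss_fake is the discriminator loss on fake samples (with its computation graph). PyTorch can combine these graphs into a single computation graph with the + operator. We then apply backpropagation and the parameter update to the combined computation graph.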

https://blog.csdn.net/m0_46510245/article/details/107741609
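To see what combining the two losses does, and what the /2 changes, here is a toy sketch in plain Python with a one-weight "discriminator" D(x) = sigmoid(w * x) (my own simplification, not the chapter's network). Backpropagating through loss_real + loss_fake yields the sum of the two gradients, and dividing the combined loss by 2 only halves that gradient. With plain SGD that is equivalent to halving the learning rate; with Adam (which I believe the chapter's training uses), a constant loss scale mostly cancels out apart from Adam's small epsilon term.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def bce(w, x, y):
    """Binary cross-entropy of a toy one-weight discriminator D(x) = sigmoid(w * x)."""
    p = sigmoid(w * x)
    return -(y * math.log(p) + (1 - y) * math.log(1 - p))


def bce_grad(w, x, y):
    """Analytic d(bce)/dw, which simplifies to (sigmoid(w * x) - y) * x."""
    return (sigmoid(w * x) - y) * x


def mean_loss(w_, x_real, x_fake):
    """The averaged loss (loss_real + loss_fake) / 2."""
    return (bce(w_, x_real, 1.0) + bce(w_, x_fake, 0.0)) / 2


w = 0.3
x_real, x_fake = 1.5, -0.7  # toy inputs: one "real" sample, one "generated" sample

g_real = bce_grad(w, x_real, 1.0)  # gradient of loss_real (label 1)
g_fake = bce_grad(w, x_fake, 0.0)  # gradient of loss_fake (label 0)

# Backpropagating through loss_real + loss_fake adds the two gradients;
# dividing the combined loss by 2 just halves the result.
grad_sum = g_real + g_fake
grad_mean = grad_sum / 2

# Central finite-difference check of the averaged loss
eps = 1e-6
numeric_grad = (mean_loss(w + eps, x_real, x_fake)
                - mean_loss(w - eps, x_real, x_fake)) / (2 * eps)
print(grad_sum, grad_mean, numeric_grad)
```

So the /2 changes the scale of the discriminator update, not its direction.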

I have translated this code to PyTorch, and it runs without crashing, but the network fails to train (both losses go way above 1, and the image is garbage).

Can you take a look at this file and see if there are any obvious mistakes?

Thank you!

For those who were confused like me about the figure discussion, it ended up being Section 17.1 that had the legend backwards rather than Section 17.2. But both sections are now correct in the main text.

OK. I’ll learn.

What is the "it" you are talking about?

Do you have an .ipynb file? It makes it easy to see the result of every step.

I tried to translate but failed. You can take a look at this PR.

https://github.com/d2l-ai/d2l-en/pull/1309

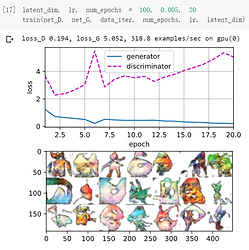

Is the number of epochs too small?

Why so slow?

You need to train for more epochs on a GPU.

It is quicker.

And thanks for your code BTW.

I think I've figured DCGAN out.

Thank you! I found your ipynb notebook and converted it to a .py file. It is working great. I hope to make a cleaned-up version of my file (I updated mine on the link I sent earlier to include GPU support, and I think some of my arguments are named more cleanly) that still produces good results. It could be days or months before that happens, though.

What is the "it" you are talking about?

I’ve updated my post to include a link to the post I was following. For some reason, the software does not make it clear what you are linking to.

I don’t have Jupyter Notebook installed on my local machine – perhaps I should install that!

I'm trying to prepare a pull request myself, and I'm running on a GPU. I've updated the code I linked earlier (sorry, I ran out of links for this post) to include GPU support. I followed d2l.ai's chapter on GPUs in doing this.

(And now, a reply to the post right before this one):

Can you share the link to the code you used to create this figure? I suspect it is your code (not mine), but I’m confused as to why your code stops after a few iterations. By the way, I really like your GPU code. It is cleaner than mine. I’m going to try to make a clean version of my code that borrows heavily from yours.

It was my code; I forgot to add the link.

The code didn't stop.

It was just still running. I'm so excited to tell you, and thanks for your code too.

Now you can check http://preview.d2l.ai/d2l-en/PR-1309/chapter_generative-adversarial-networks/dcgan.html

It runs well.

@yoderj

If you'd like to dig deeper, here is my GAN repo, which records all the code I have tried:

https://github.com/StevenJokes/d2l-en-read/tree/master/chapter-generative-adversarial-networks

Happy to share it with you.

I also created a discussion thread for the DCGAN PyTorch version.

We can talk more about the code there.

Deep Convolutional Generative Adversarial Networks