https://d2l.ai/chapter_attention-mechanisms-and-transformers/attention-scoring-functions.html

For the batched dot product, I don’t understand why we divide by sqrt(d) instead of d. As I see it, dividing by d already stops the dot product from blowing up in higher dimensions, and the square root adds needless complexity. Since d is the obvious first choice, there must be a reason for the sqrt. What am I missing here?

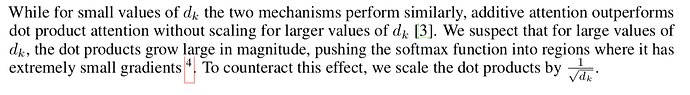

Hi @Van_Tran, great question! Please refer to the original paper section 3.2.1:

The reason we don’t use d is similar: a large d may lead to extremely small gradients.
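To make the effect of the scaling choice concrete, here is a small sketch (not from the book or the paper) that compares attention weights under three scalings. It assumes queries and keys with independent standard normal entries; `softmax`, `mean_max_weight`, and the sizes `d = 256`, 10 keys, and 200 trials are illustrative choices. It measures how peaked the softmax is, not the gradients themselves. Note also that the score q·k/d has gradient k/d with respect to q, so dividing by d shrinks that gradient by a further factor of sqrt(d) compared with dividing by sqrt(d).

```python
import math
import random

def softmax(scores):
    # Subtract the max for numerical stability before exponentiating.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def mean_max_weight(d, scale, n_keys=10, n_trials=200, seed=0):
    """Average of the largest attention weight over random queries.

    Assumption: query and key entries are i.i.d. standard normal.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_trials):
        q = [rng.gauss(0.0, 1.0) for _ in range(d)]
        scores = []
        for _ in range(n_keys):
            k = [rng.gauss(0.0, 1.0) for _ in range(d)]
            scores.append(sum(a * b for a, b in zip(q, k)) / scale)
        total += max(softmax(scores))
    return total / n_trials

d = 256
w_none = mean_max_weight(d, scale=1.0)           # no scaling
w_sqrt = mean_max_weight(d, scale=math.sqrt(d))  # divide by sqrt(d)
w_full = mean_max_weight(d, scale=float(d))      # divide by d

print(f"no scaling: {w_none:.3f}")
print(f"/ sqrt(d):  {w_sqrt:.3f}")
print(f"/ d:        {w_full:.3f}")
```

With 10 keys, a value near 1 means the softmax is almost one-hot (saturated), and a value near 0.1 means it is almost uniform, so the scores barely distinguish the keys.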

It is really useful. Will there be a TensorFlow implementation for the remaining topics?

Is this correct in PyTorch MLPAttention?

query, key = self.W_k(query), self.W_q(key)

I think it should be

query, key = self.W_q(query), self.W_k(key)

Thank you so much for the effort!

Hi @Van_Tran! Let’s call the batched dot product X. As mentioned in the text, Var(X) = d. To reduce the variance to 1, you have to divide X by sqrt(d), since Var(aX) = a^2 * Var(X). If you divided X by d instead, the variance of the result would be Var(X) / d^2 = 1/d (a standard deviation of 1/sqrt(d)), which can be too small for large values of d.
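The variances can also be checked empirically. The sketch below assumes query and key entries are independent with zero mean and unit variance (drawn here from a standard normal); `dot_product_variances`, `d = 64`, and the sample count are illustrative names and values, not anything from the book.

```python
import math
import random
import statistics

def dot_product_variances(d, n_samples=5000, seed=0):
    """Estimate Var(X), Var(X / sqrt(d)) and Var(X / d) for X = q . k.

    Assumption: entries of q and k are i.i.d. standard normal.
    """
    rng = random.Random(seed)
    raw = []
    for _ in range(n_samples):
        q = [rng.gauss(0.0, 1.0) for _ in range(d)]
        k = [rng.gauss(0.0, 1.0) for _ in range(d)]
        raw.append(sum(qi * ki for qi, ki in zip(q, k)))
    var_raw = statistics.pvariance(raw)
    var_sqrt = statistics.pvariance([x / math.sqrt(d) for x in raw])
    var_d = statistics.pvariance([x / d for x in raw])
    return var_raw, var_sqrt, var_d

d = 64
var_raw, var_sqrt, var_d = dot_product_variances(d)
print(f"Var(X)           ~ {var_raw:.2f}  (theory: {d})")
print(f"Var(X / sqrt(d)) ~ {var_sqrt:.3f}  (theory: 1)")
print(f"Var(X / d)       ~ {var_d:.4f}  (theory: {1 / d:.4f})")
```

The estimates are noisy, but they should land close to d, 1, and 1/d respectively.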

Why not add a bias term when calculating the attention score? Does anyone know?

@zophy I guess because we don’t need our scores to be shifted with biases if keys and queries are close to zero.
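One related fact, separate from the guess above: softmax is invariant to adding the same constant to every score, so a single scalar bias added to the final scores would cancel out and change nothing. The sketch below checks this numerically; `softmax_weights`, the scores, and the bias value are made up for illustration. This covers only a constant bias on the final scores. A bias inside the tanh of additive attention, or one that depends on the key, would not cancel this way.

```python
import math

def softmax_weights(scores):
    # Subtract the max for numerical stability before exponentiating.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

scores = [0.3, -1.2, 2.0, 0.5]  # hypothetical attention scores
bias = 4.0                      # hypothetical constant bias

plain = softmax_weights(scores)
shifted = softmax_weights([s + bias for s in scores])

# Largest difference between the two sets of attention weights.
max_diff = max(abs(a - b) for a, b in zip(plain, shifted))
print(max_diff)
```

The printed difference should be zero up to floating-point rounding.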